The AI revolution isn’t waiting for boardroom approval. Employees across industries are already using personal AI tools like ChatGPT, Gemini, and Claude to streamline workflows, fill skill gaps, and boost productivity. This quiet adoption—known as Shadow AI—is reshaping project-based businesses from the bottom up.

But with stealth innovation comes stealth risk. Shadow AI introduces serious vulnerabilities around data security, compliance, and decision-making integrity. If left unchecked, it could undermine the very growth AI promises to deliver.

What Is Shadow AI?

Shadow AI refers to the use of unauthorized or unvetted AI tools by employees without formal approval or oversight. These tools are often free, easy to access, and highly effective—making them irresistible to workers facing tight deadlines or resource constraints.

Unfortunately, their convenience masks critical risks.

How Shadow AI Emerges

The Adoption Chain

- Tool Discovery: Employees find free AI tools online.

- Personal Use: Tools are used to automate tasks, summarize documents, or generate client-facing content.

- Defiance: Even if banned, 46% of employees say they’d continue using them.

- Risk Exposure: Sensitive data may be processed or stored in unsecured environments.

- Compliance Breach: Without visibility into how and where data is handled, organizations can violate regulatory obligations without realizing it.

Why Shadow AI Is So Dangerous

• Data Leakage: 1 in 5 companies have already experienced data exposure due to generative AI use.

• Compliance Violations: GDPR, HIPAA, and sector-specific regulations may be breached unknowingly.

• Insider Risk: 75% of CISOs now view insiders as a greater threat than external actors.

• Decision-Making Integrity: AI-generated outputs can contain bias, hallucinations, or inaccuracies.

• Loss of Control: Without oversight, organizations can’t track how AI is influencing operations.

Shadow AI Is a Symptom — Not the Disease

The rise of Shadow AI isn’t just a cybersecurity issue. It’s a signal that employees are ready to innovate, but leadership hasn’t provided the tools or guardrails to do so safely. Firms that fail to respond risk falling behind as AI transforms entire industries.

How to Build an AI-Ready Culture

- Establish Clear AI Governance Policies

Define which tools are approved, how they should be used, and what data can be processed.

- Embed AI Into Business Strategy

Don’t treat AI as a side project. Integrate it into core operations and align it with organizational goals.

- Create Safe Experimentation Zones

Build internal “playgrounds” where employees can test AI tools without risking compliance or data integrity.

- Foster Cross-Department Dialogue

Encourage collaboration between IT, security, and business units to evaluate AI’s risks and benefits.

- Invest in AI Literacy & Training

Educate employees on how AI models work, where data goes, and how to validate outputs responsibly.
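The first practice in the list above is defining which tools are approved and what data they may process. That policy can also be written down in a form a machine can check. The sketch below is a minimal Python illustration under stated assumptions. The four-level data classification and the tool names (`internal-llm-sandbox`, `vendor-assistant-enterprise`, `free-web-chatbot`) are hypothetical placeholders. A real policy would come from your own governance process and would most likely be enforced through existing access and data-protection controls rather than a standalone script.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical data-classification levels, ordered least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3  # e.g. personal or health data subject to GDPR or HIPAA


# Hypothetical allowlist: each approved tool maps to the most sensitive
# data class it may process. Any tool not listed is treated as unapproved.
APPROVED_TOOLS = {
    "internal-llm-sandbox": DataClass.CONFIDENTIAL,
    "vendor-assistant-enterprise": DataClass.INTERNAL,
}


def check_request(tool: str, data_class: DataClass) -> tuple[bool, str]:
    """Return (allowed, reason) for using `tool` on data of `data_class`."""
    limit = APPROVED_TOOLS.get(tool)
    if limit is None:
        # Deny by default: unlisted tools are exactly what Shadow AI looks like.
        return False, f"{tool!r} is not an approved tool"
    if data_class > limit:
        return False, f"{tool!r} is approved only up to {limit.name} data"
    return True, "allowed"


if __name__ == "__main__":
    for tool, data in [
        ("internal-llm-sandbox", DataClass.INTERNAL),
        ("vendor-assistant-enterprise", DataClass.REGULATED),
        ("free-web-chatbot", DataClass.PUBLIC),
    ]:
        allowed, reason = check_request(tool, data)
        print(f"{tool:28} {data.name:12} -> {reason}")
```

Keeping the allowlist as data rather than prose makes it easy to review, version, and audit. Because anything not explicitly listed is denied, newly discovered tools stay blocked until someone deliberately approves them.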

Need Help Navigating Shadow AI?

AI adoption is accelerating—and so are the risks. At JND Consulting Group, we help organizations:

- Audit and mitigate Shadow AI exposure

- Develop AI governance frameworks that empower innovation

- Train teams on secure, compliant AI usage

- Align AI tools with business strategy and regulatory requirements

Is Your Cloud Data Truly Safe? Why Microsoft 365 and Google Workspace Need Third-Party Backup

Facebook Twitter LinkedIn Is Your Cloud Data Truly Safe? Why Microsoft 365 and Google Workspace Need Third-Party Backup In today’s cloud-first world, most businesses rely

February 2026: Six Actively Exploited Zero‑Days — An Unprecedented Warning for the Industry

Facebook Twitter LinkedIn February 2026: Six Actively Exploited Zero‑Days — An Unprecedented Warning for the Industry Microsoft February 2026 Patch Tuesday just landed, and it’s

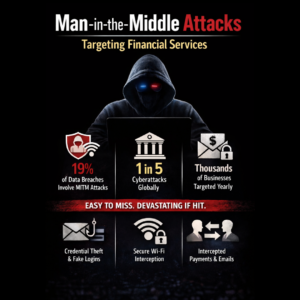

Man-in-the-Middle Attacks: How Financial Services Are Being Silently Hijacked — and How Easy It Is to Be Tricked

Facebook Twitter LinkedIn Man-in-the-Middle Attacks: How Financial Services Are Being Silently Hijacked Financial services organizations invest heavily in cybersecurity, yet one of the most dangerous